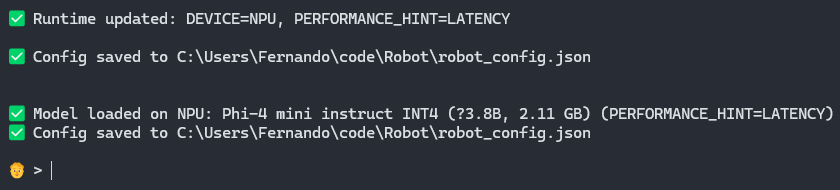

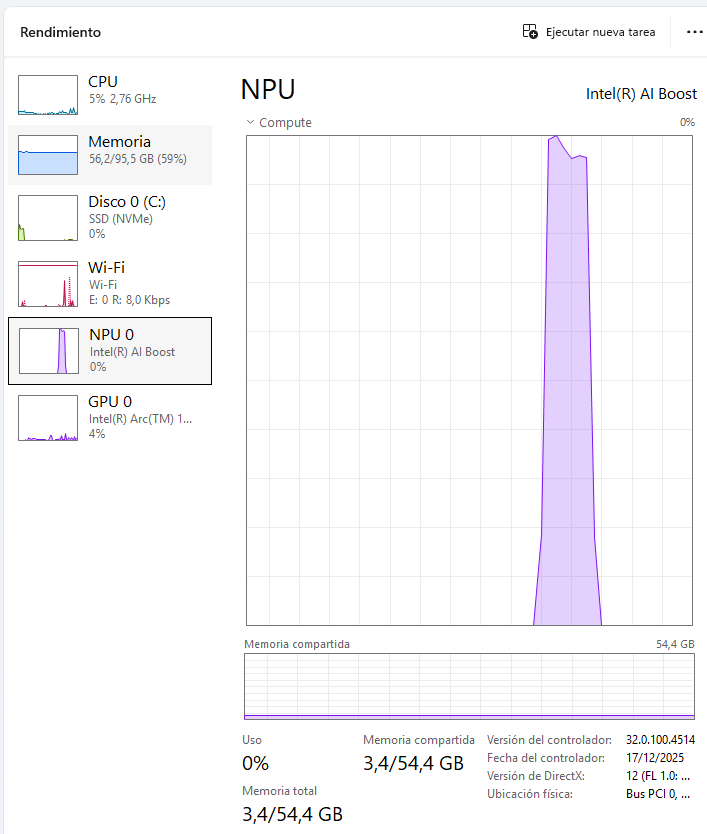

LLM Runtime

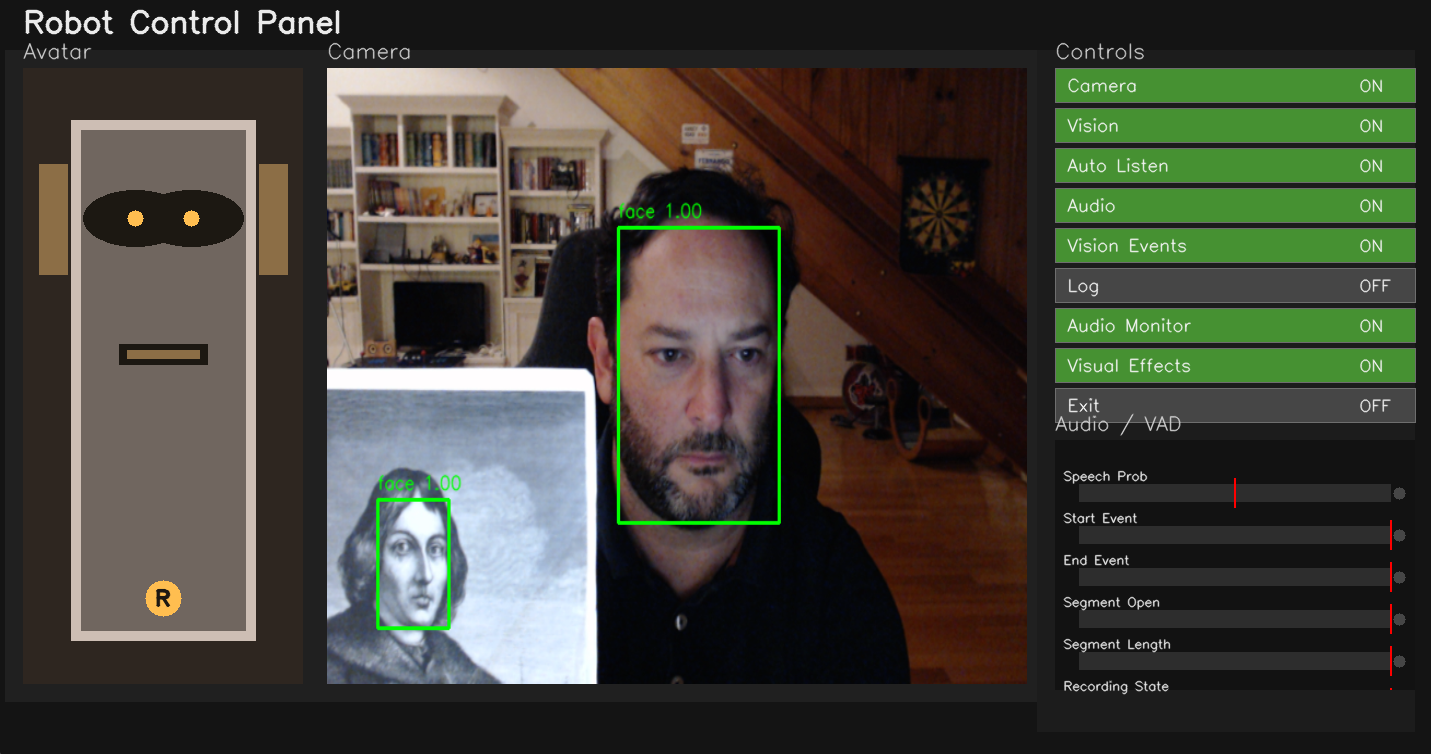

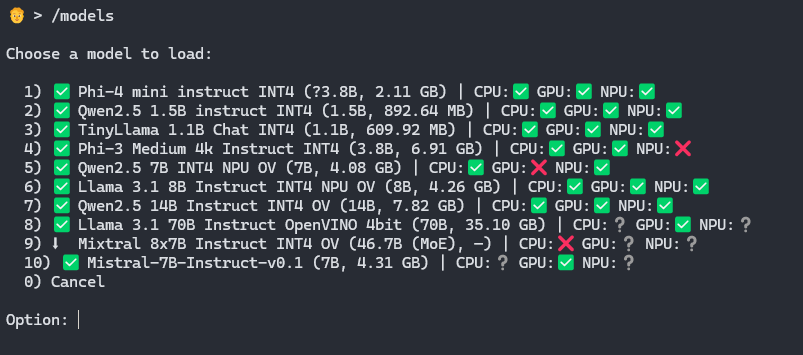

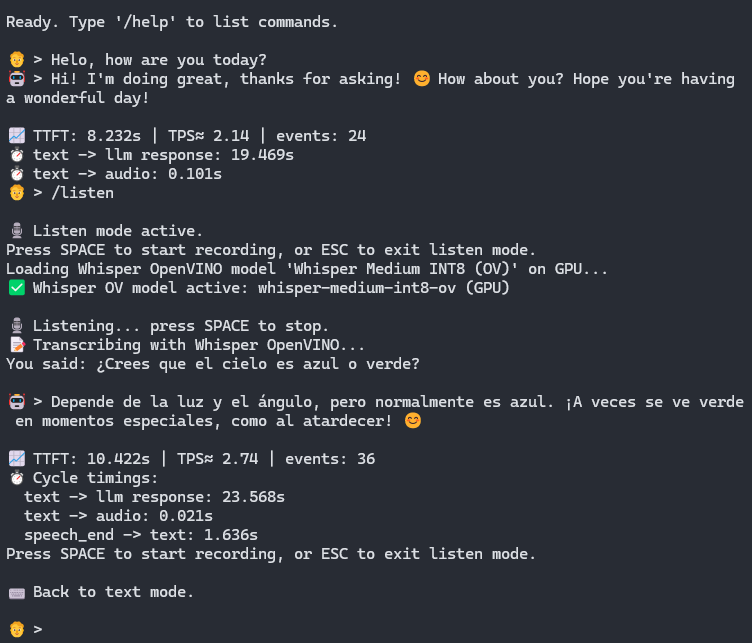

Local LLM chat through OpenVINO GenAI on CPU, GPU, NPU, or AUTO, plus external OpenAI-compatible backends.
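
As a rough illustration of what talking to an external OpenAI-compatible backend can look like, the sketch below builds (but does not send) a chat completion request using only the standard library. The helper name `build_chat_request`, the base URL, and the model name are assumptions made for this example, not names taken from this project; only the `/chat/completions` path and the `model`/`messages`/`stream` fields follow the widely used OpenAI chat format.

```python
import json
import urllib.request


def build_chat_request(base_url, model, user_text, api_key=None, stream=False):
    """Build a POST request for an OpenAI-compatible chat endpoint.

    Hypothetical helper for illustration; the request is only constructed,
    never sent, so no server is needed to run this snippet.
    """
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": user_text}],
        "stream": stream,
    }
    headers = {"Content-Type": "application/json"}
    if api_key:
        # Many OpenAI-compatible servers accept a bearer token; local ones often ignore it.
        headers["Authorization"] = f"Bearer {api_key}"
    return urllib.request.Request(
        url=base_url.rstrip("/") + "/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers=headers,
        method="POST",
    )


# Assumed example values: adjust the URL and model name to your backend.
request = build_chat_request("http://localhost:8000/v1", "my-model", "Hello!")
```

Passing the resulting object to `urllib.request.urlopen` would perform the call against whichever backend is actually listening at that address.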

Speech Stack

Classic Whisper and OpenVINO Whisper STT, Whisper preload on startup, and continuous auto-listen with Silero VAD.

TTS Options

Windows SAPI, Parler, OpenVINO, Kokoro, BabelVox, and eSpeak NG, with optional streamed speech output while the LLM is still generating.
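
One common way to speak while the LLM is still generating is to buffer incoming tokens and hand each completed sentence to the TTS engine as soon as it appears. The sketch below shows that idea only; `sentences_from_tokens` is a hypothetical helper and the punctuation-based splitting rule is an assumption, not necessarily how this project chunks text.

```python
import re
from typing import Iterable, Iterator

# A sentence ends at ., ! or ? followed by whitespace (simplifying assumption).
_SENTENCE_END = re.compile(r"([.!?]+)\s+")


def sentences_from_tokens(tokens: Iterable[str]) -> Iterator[str]:
    """Yield complete sentences from a stream of text fragments."""
    buffer = ""
    for token in tokens:
        buffer += token
        while True:
            match = _SENTENCE_END.search(buffer)
            if match is None:
                break
            # Keep the punctuation with the sentence, drop the trailing whitespace.
            sentence = buffer[: match.end(1)].strip()
            buffer = buffer[match.end():]
            if sentence:
                yield sentence
    # Flush whatever is left once generation has finished.
    tail = buffer.strip()
    if tail:
        yield tail


for sentence in sentences_from_tokens(["Hel", "lo the", "re. How", " are you", "? Fine"]):
    print(sentence)  # each sentence could be passed to a TTS engine here
```

A sentence is only emitted once the text after its punctuation arrives, so the final fragment is flushed at the end of the stream.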

Vision Events

OpenVINO face detection, presence-aware behavior, throttled logging, and optional headless camera processing.
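
Throttled logging for per-frame vision events can be approximated with a small per-key rate limiter, sketched below. The `Throttle` class, the event names, and the 5-second interval are assumptions chosen for illustration; the project's actual throttling logic may differ.

```python
import time


class Throttle:
    """Allow each event key at most once per `min_interval_s` seconds."""

    def __init__(self, min_interval_s, clock=time.monotonic):
        self.min_interval_s = min_interval_s
        self._clock = clock  # injectable so the behavior can be tested without sleeping
        self._last_allowed = {}

    def allow(self, key):
        now = self._clock()
        last = self._last_allowed.get(key)
        if last is not None and now - last < self.min_interval_s:
            return False  # same event seen too recently, suppress it
        self._last_allowed[key] = now
        return True


# Hypothetical usage: log "face_detected" at most once every 5 seconds.
throttle = Throttle(min_interval_s=5.0)
if throttle.allow("face_detected"):
    print("face detected")
```

Keying the limiter by event name means a burst of one event type does not suppress a different one.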

Developer Tools

Benchmarking, compatibility tracking, JSON catalogs under ~/ov_models, and an OpenAI-compatible local server.

Platform Support

Windows and Linux with OS-specific dependency files, install scripts, and adaptive backend behavior.
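
Selecting an OS-specific dependency file can be as simple as a lookup on `platform.system()`, as in the sketch below. The file names `requirements-windows.txt` and `requirements-linux.txt` and the function `requirements_file` are placeholders assumed for this example; check the repository for the real file names and install scripts.

```python
import platform

# Placeholder file names, assumed for illustration only.
REQUIREMENTS_BY_OS = {
    "Windows": "requirements-windows.txt",
    "Linux": "requirements-linux.txt",
}


def requirements_file(system=None):
    """Return the dependency file for the given (or current) operating system."""
    system = system or platform.system()
    try:
        return REQUIREMENTS_BY_OS[system]
    except KeyError:
        raise RuntimeError(f"Unsupported platform: {system!r}") from None


print(requirements_file("Linux"))
```

Failing loudly on an unlisted platform avoids silently installing the wrong dependency set.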